Research Areas

Physics of Shapes

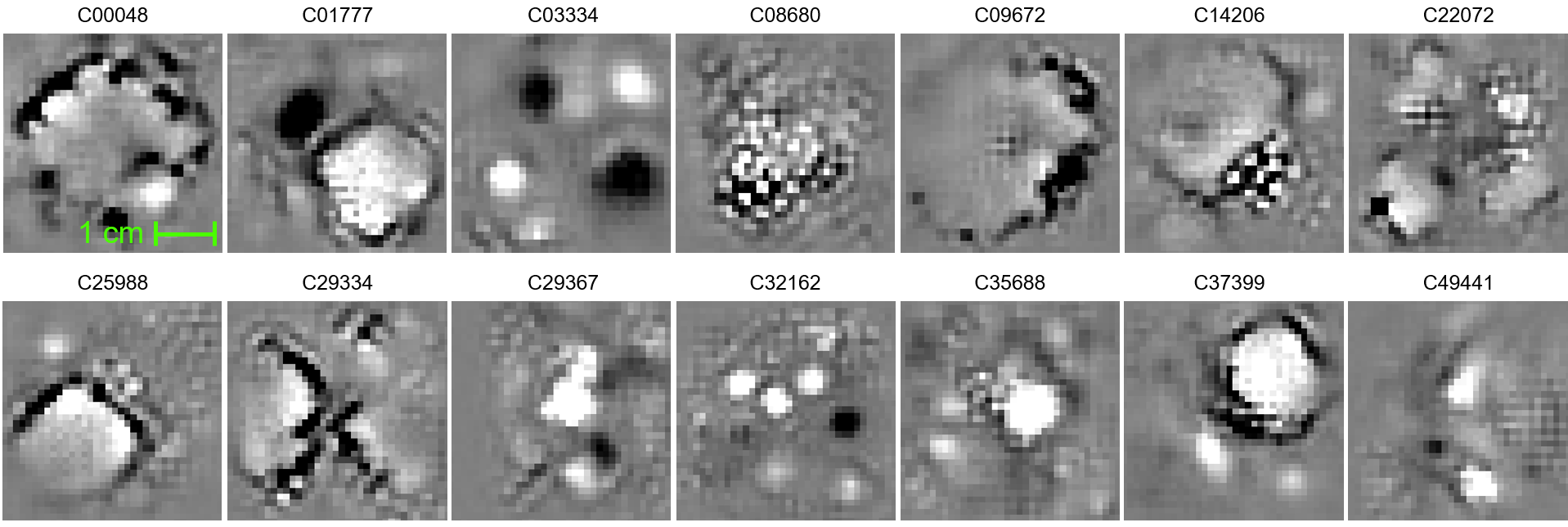

Shapes play a critical role in physics. For example, microstructural morphology determines the mechano-chemical behavior of a material; the curves of a car's silhouette affect its aerodynamics; and certain nano-scale surface texture patterns can make a surface repel or absorb liquids. In the Visual Intelligence Laboratory, we are interested in discovering linkages between shapes and various physical phenomena using the tools of computational differential geometry, computer vision, and machine learning.

Publications & Presentations

- Video Presentation: Physics Aware Recurrent Convolutional Neural Networks (PARC) - Phong Nguyen, SHOCK’22 Conference Presentation

- Video Presentation: AI-assisted Microstructure Design of Energetic Materials - Joseph Choi, SHOCK’22 Conference Presentation

- List of Relevant Papers (Materials Science Applications)

- List of Relevant Papers (Design, Manufacturing, & Engineering Applications)

Websites & Other Resources

- MaterIQ Project Page: https://tinyurl.com/materiq

- National Science Foundation DMREF Project Page: https://dmref.org/projects/1559

Sponsored Projects

| DMREF: Physics-Informed Meta-Learning for Design of Complex Materials ($1.64M + $0.1M Supplement) | |

| Funding Source | National Science Foundation (NSF) |

| Period | 03/2022-02/2026 |

| Role | Principal Investigator |

| Description | The goal of this project is to develop meta-learning algorithms that extend machine learning models trained on one specific material system to a broader range of material systems (different species or length/time scales). |

| Investigating the Mechanisms of Particle Energization in Collisionless Heliospheric Shocks ($1.2M) | |

| Funding Source | National Aeronautics and Space Administration (NASA) |

| Period | 07/2020-06/2023 |

| Role | Co-Investigator (PI: Gregory Howes) |

| Description | The goal of this project is to understand the physics of collisionless heliospheric shocks by analyzing patterns from space experiments and simulations using convolutional neural networks. |

| Integrating multiscale modeling and experiments to develop a meso-informed predictive capability for explosives safety and performance ($7.5M) | |

| Funding Source | U.S. Air Force Office of Scientific Research Multidisciplinary University Research Initiative Program |

| Period | 06/2019-05/2024 |

| Role | Co-Investigator (PI: Thomas Sewell) |

| Description | The goal of this project is to develop machine learning capabilities to predict the safety and performance characteristics of explosives. |

| Machine learning mesoscale structure-property-performance relationships of energetic materials for multiscale modeling of shock-induced detonation ($899,563) | |

| Funding Source | U.S. Air Force Office of Scientific Research |

| Period | 06/2019-05/2022 |

| Role | Co-Principal Investigator (PI: H.S. Udaykumar) |

| Description | In this project, we are looking to learn mesoscale structure-property-performance relationships of energetic materials, such as explosives, propellants, and fuels, using deep neural networks. |

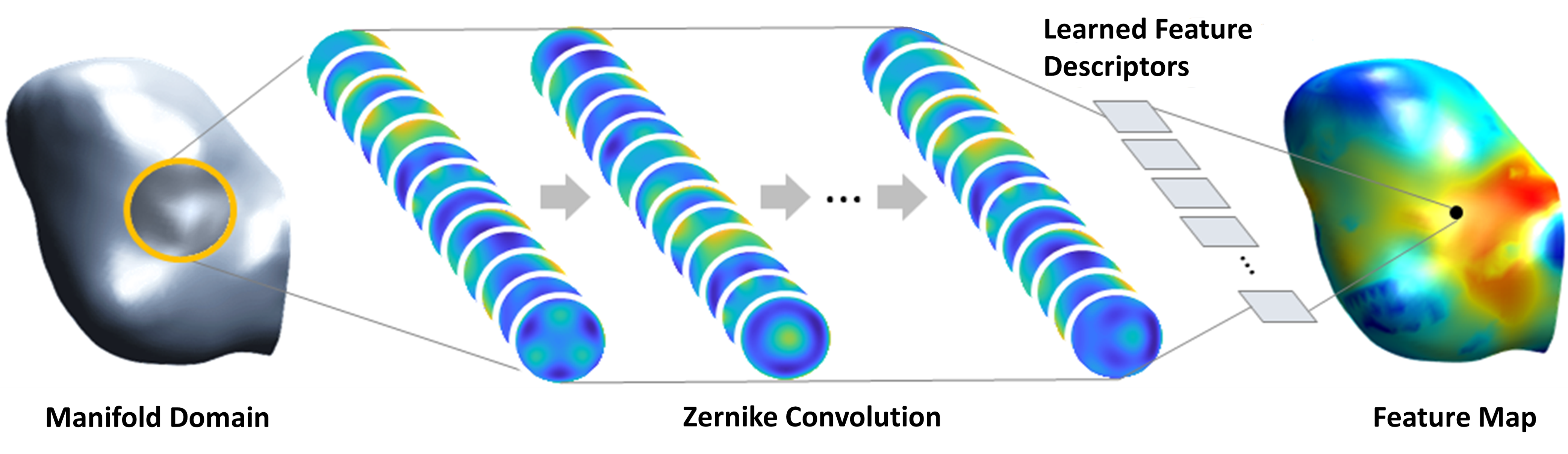

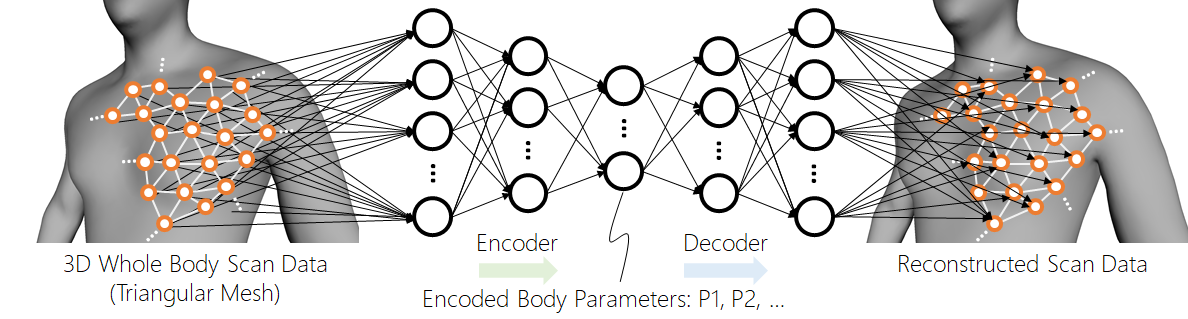

Geometric Deep Learning

Convolutional neural networks (CNNs) have demonstrated unprecedented success in a variety of visual cognition tasks. This success, however, has been concentrated mostly in computer vision and image/signal processing applications, where the data have a regular, grid-like structure (a signal is a 1D grid of amplitudes, an image is a 2D grid of pixel values, and so on). Meanwhile, a large number of problems cannot benefit much from CNNs because their data are non-Euclidean. For instance, 3D models, such as computer graphics objects or computer-aided design (CAD) parts, often exist as a boundary representation (B-rep) model in which the geometry is represented by a thin, arbitrarily shaped boundary surface (a 2D manifold) rather than a grid. Hence, there is no canonical way of representing such data in the tensor format that CNNs expect, and standard CNNs cannot analyze them directly. Point clouds from 3D scanners or LiDAR systems, graphs from social network data, molecular structures of pharmaceutical compounds, protein folds, and many other non-image geometric data fall into this category. At the Visual Intelligence Laboratory, we aim to develop mathematical foundations and scalable algorithms that extend CNNs to a variety of geometric domains. Many scientific fields may benefit from such new capabilities, including computer-aided design and manufacturing (CAD/CAM), computer graphics, 3D sensing systems on autonomous vehicles, the brain mapping problem in neuroscience, and computational mechanics, to name a few.
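The representation problem described above can be illustrated with a minimal NumPy sketch. It is only an illustration under stated assumptions, not the laboratory's method: the data are random, the function names `flattened` and `max_pooled` are hypothetical, and coordinate-wise max pooling stands in for one well-known way of building an order-independent summary of a point set.

```python
import numpy as np

rng = np.random.default_rng(0)

# Grid data: an image is a 2D array in which pixel (i, j) always has the same
# neighbors, so the array layout itself is a canonical representation.
image = rng.random((4, 4))

# Non-grid data: a point cloud is an unordered set of 3D points. Writing it as
# an (N, 3) array imposes a row order that has no geometric meaning.
points = rng.random((5, 3))
reordered = points[::-1]  # same set of points, rows listed in reverse


def flattened(cloud: np.ndarray) -> np.ndarray:
    """Order-dependent input, as a grid-based model would read it."""
    return cloud.reshape(-1)


def max_pooled(cloud: np.ndarray) -> np.ndarray:
    """Order-independent summary: coordinate-wise maximum over all points."""
    return cloud.max(axis=0)


# The same shape yields two different flattened inputs...
order_sensitive = not np.array_equal(flattened(points), flattened(reordered))
# ...while the pooled summary is identical for both row orderings.
order_invariant = np.array_equal(max_pooled(points), max_pooled(reordered))
```

Both flags evaluate to true here: the flattened array changes when the rows are relisted even though the underlying shape does not, which is why a model that assumes a fixed grid layout cannot be applied to such data as-is.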

Websites & Other Resources

- Shape Matters: Evidence from Machine Learning on Body Shape-Income Relationship - Project Page

- ZerNet: Zernike Convolutional Neural Networks - Project Page

Digital Human Modeling

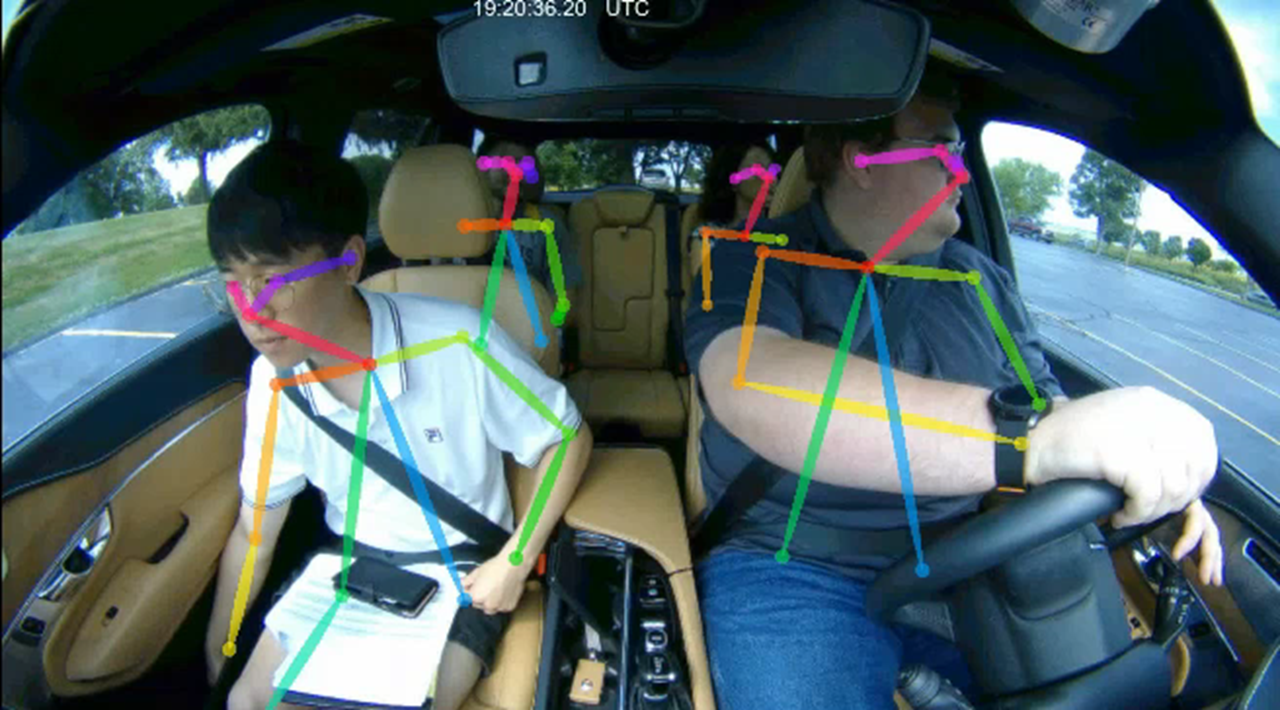

People produce a variety of visual cues both consciously and unconsciously. These cues include facial expressions, eye movements, body gestures, and motion. Given a context, we can sense a person's emotional and physical states from these cues, such as joy, fatigue, anger, drowsiness, or excitement. For example, car companies are keen to integrate technology that detects such visual cues into their advanced driver assistance systems (ADAS) to enhance safety and convenience. As another example, many studies report that patterns of body motion are correlated with, and thus indicative of, a person’s performance. Furthermore, subtle changes in such motion patterns can sometimes be a precursor to a musculoskeletal injury or a similar ergonomic hazard. Accurate sensing and detection of such visual cues can therefore be critical in many applications. The Visual Intelligence Laboratory is pioneering research to detect and understand the visual cues people exhibit in different contexts, such as driving and manufacturing, by combining state-of-the-art computer vision technologies with its expertise in geometric data analysis.

Sponsored Projects

| A study on user experience in autonomous driving scenarios ($221,398) | |

| Funding Source | Hyundai Motor Company |

| Period | 04/2019-01/2020 |

| Role | Principal Investigator |

| Driver State Detection via Deep Learning ($250,000) | |

| Funding Source | Aisin Technical Center of America |

| Period | 10/2017-03/2019 |

| Role | Principal Investigator |

| Developing Connected Simulation to Study Interactions between Drivers, Pedestrians, and Bicyclists ($1.8M) | |

| Funding Source | U.S. Department of Transportation Federal Highway Administration (FHWA) Exploratory Advanced Research Program |

| Period | 10/2017-09/2019 |

| Role | Co-Principal Investigator (PI: Daniel V. McGehee) |

| Description | The goal of this project is to develop virtual reality technologies for interactive simulation among subjects within different simulation environments to study road user behaviors for enhancing road safety. |

Medical Shape Analysis

By studying the shapes and textures of medical images, it is possible to detect disease and predict clinical outcomes more accurately. To this end, we are interested in characterizing the shapes and textures of diseases in medical images (X-rays, CT, PET, MRI, etc.) and correlating them with other clinical parameters.

Sponsored Projects

| Water and chloride movement in neurons during seizure activity ($2,122,253) | |

| Funding Source | National Institutes of Health |

| Period | 09/2020-06/2025 (Phase I) |

| Role | Co-Investigator (PI: Joseph Glykys) |

| Description | This project aims to study water and chloride movement in neurons during seizures and the corresponding changes in neuronal morphology using deep learning. |

| NSF Convergence Accelerator - Track D: ImagiQ: Asynchronous and Decentralized Federated Learning for Medical Imaging ($999,770) | |

| Funding Source | National Science Foundation |

| Period | 09/2020-05/2021 (Phase I) |

| Project Page | link |

| Role | Principal Investigator |

| Description | The goal of this project is to advance medical imaging through collaborative model development and validation. To eliminate the hurdle of direct patient data sharing, we advocate a novel decentralized, asynchronous federated learning (FL) scheme. |